AI agents are quickly moving from experimental demos into real business workflows. They can plan tasks, use software tools, call APIs, summarize data, write code, monitor systems, and trigger actions with less human input than traditional chatbots. That power is useful, but it also changes the security model. In 2026, the important question is no longer only “what can an AI agent do?” It is also “what should an AI agent be allowed to do?”

Recent technology news and enterprise security discussions show a clear trend: organizations want autonomous AI tools, but they are also worried about agentic attacks, identity misuse, sensitive data exposure, and weak governance. For Muawia Tech readers, the practical lesson is simple: AI agents should be treated like powerful digital workers with identities, permissions, monitoring, and limits.

What Are AI Agents?

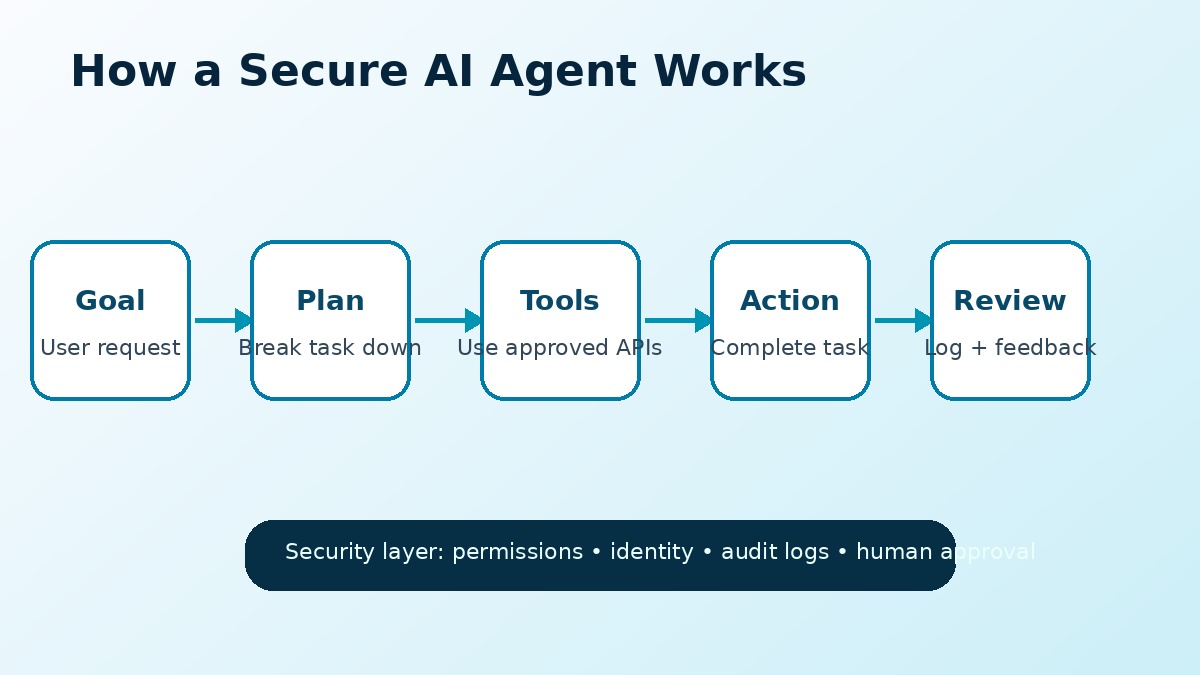

AI agents are software systems that use artificial intelligence to understand a goal, decide what steps are needed, use tools, and complete tasks. A normal chatbot usually waits for a user prompt and returns an answer. An AI agent can go further by taking action across applications, databases, cloud services, ticketing tools, email, code repositories, or security platforms.

For example, a customer-support agent may read a request, check the customer record, search documentation, draft a reply, update a ticket, and escalate the case if needed. A cybersecurity agent may review alerts, correlate logs, enrich suspicious IP addresses, and recommend a response. A business automation agent may prepare a weekly report, pull numbers from dashboards, and send the result to a team.

Why Securing AI Agents Matters in 2026

The shift from chatbots to AI agents creates a bigger risk surface. If a chatbot gives a wrong answer, a person may notice before acting. If an AI agent has permission to use tools, send messages, modify files, or access data, a mistake or malicious instruction can become an operational problem.

Security teams are especially focused on agentic AI because agents combine several sensitive areas at once: identity, automation, data access, third-party integrations, and decision-making. A poorly configured agent can behave like an over-permissioned employee account that never sleeps and can act at machine speed.

Common AI Agent Security Risks

Before deploying autonomous AI tools, businesses should understand the most common risks. These risks do not mean organizations should avoid AI agents completely. They mean agents need clear boundaries and practical controls.

1. Over-Permissioned Access

The biggest mistake is giving an AI agent broad access “just in case” it needs something later. If an agent only needs to read support tickets, it should not have permission to delete records, export customer databases, or change billing settings. Least privilege is essential.

2. Sensitive Data Exposure

AI agents often need context to work well. That context may include emails, files, customer details, logs, contracts, or source code. If data boundaries are unclear, the agent may process or share information that should stay private.

3. Prompt Injection and Malicious Instructions

Prompt injection happens when hidden or malicious instructions influence the agent’s behavior. For example, an agent browsing a web page or reading a document may encounter instructions that try to override the original task. Agents should not blindly trust every piece of text they read.

4. Unapproved Actions

Some actions should require human approval. Sending external emails, deleting data, changing access permissions, publishing content, transferring money, or modifying production systems should not happen automatically unless the risk is well controlled.

5. Weak Audit Trails

If an AI agent makes a change, teams must be able to answer: what happened, when did it happen, what data was used, which tool was called, and who approved it? Without logs, troubleshooting and accountability become difficult.

AI Agent Security Checklist

The following checklist gives technology leaders, small businesses, and security teams a practical starting point for safer AI automation.

1. Give Every Agent a Clear Identity

Each AI agent should have its own account, token, or service identity. Avoid sharing a human user’s credentials. A separate identity makes it easier to limit permissions, monitor activity, rotate access, and disable the agent if something goes wrong.

2. Use Least Privilege Permissions

Start with the minimum permissions required for the agent’s task. Add access only when there is a clear business need. Review permissions regularly, especially when an agent’s role changes.

3. Separate Read Actions from Write Actions

Reading data is usually lower risk than changing data. A research agent may only need read access. A publishing, finance, or operations agent may need write access, but those actions should be more tightly controlled.

4. Require Human Approval for High-Risk Tasks

Human-in-the-loop approval is still one of the strongest controls. Use approvals for external communication, production changes, financial actions, account management, legal content, or any workflow where a mistake could cause serious damage.

5. Limit the Data the Agent Can See

Agents should not automatically receive full access to all company data. Use data classification, scoped folders, filtered APIs, and role-based access to keep sensitive information out of unnecessary workflows.

6. Log Tool Calls and Decisions

Record prompts, tool calls, API responses, approval decisions, and final actions where privacy rules allow. Logs help teams investigate incidents, improve prompts, and prove that controls are working.

7. Test Agents Before Production

Run agents in a sandbox environment before connecting them to real systems. Test normal tasks, edge cases, malicious instructions, unexpected files, broken APIs, and permission failures.

8. Monitor for Unusual Behavior

AI agent monitoring should include unusual login locations, abnormal API usage, unexpected data exports, repeated failed actions, and attempts to access systems outside the agent’s role.

Where AI Agents Can Help Businesses Safely

When deployed carefully, AI agents can be valuable in many areas. The safest early use cases are usually low-risk, reversible, and easy to review.

- Research and summarization: collecting information, summarizing documents, and preparing briefs.

- Customer support assistance: drafting replies while a human approves the final response.

- Security alert enrichment: gathering context for analysts without automatically blocking accounts or deleting files.

- Internal reporting: preparing dashboards, summaries, and weekly updates from approved data sources.

- Content workflow support: drafting outlines, checking SEO elements, and preparing editorial assets for review.

For more technology and AI coverage, readers can explore the Artificial Intelligence section on Muawia Tech. Businesses also deploying cloud tools should follow safe infrastructure practices in the Cloud category.

Best Practices for Small Businesses

Small businesses do not need a complex enterprise security program to start safely. They can begin with practical rules: use separate accounts, connect only necessary tools, avoid sensitive customer data in early tests, require approval before external actions, and review logs weekly.

It is also wise to document what each agent is allowed to do. A simple one-page policy can define the agent’s purpose, connected systems, data access, approval requirements, and owner. This prevents “automation drift,” where a tool slowly gains more access without proper review.

The Future of AI Agent Governance

As AI agents become common, governance will become a competitive advantage. Companies that can safely deploy agents will automate faster without losing control. Companies that ignore identity, access, and monitoring may face data leaks, compliance issues, and operational mistakes.

The next generation of AI platforms will likely include stronger built-in controls: agent identities, policy engines, permission scopes, action approvals, safer browser/tool use, and better audit logs. Until then, organizations should design their own guardrails before giving agents access to important systems.

FAQ About Securing AI Agents

What is an AI agent?

An AI agent is an artificial intelligence system that can understand a goal, plan steps, use tools, and take actions across software systems with some level of autonomy.

Are AI agents safe for business use?

AI agents can be safe when they are limited by clear permissions, human approval, data boundaries, monitoring, and audit logs. They become risky when they have broad access without oversight.

What is the biggest security risk with AI agents?

The biggest risk is over-permissioned access. If an agent can use too many tools or see too much data, a mistake or malicious instruction can cause significant harm.

Should AI agents have their own accounts?

Yes. Each AI agent should have its own identity or service account so its permissions and activity can be controlled, monitored, and revoked separately.

How can companies start using AI agents safely?

Start with low-risk workflows, use least privilege, keep humans in the approval loop for important actions, test in a sandbox, and monitor all tool calls and outputs.

Conclusion

AI agents are becoming one of the most important automation trends of 2026. They can save time, improve workflows, and help teams work faster. But because they can also access tools and take action, they need stronger security controls than ordinary chatbots.

The safest path is to treat AI agents like digital workers: give them clear identities, limited permissions, approved tools, monitored actions, and human oversight for sensitive decisions. With the right governance, businesses can benefit from AI agents without creating unnecessary cybersecurity risk.